Archive

Dropbox waits almost six months to fix a flaw that probably took less than a day

Graham Cluley is a much respected security expert – but we don’t always agree. Full disclosure – the early public disclosure of a vulnerability whether or not the vendor has a fix available – is an example.

I believe that vendors should be notified when a flaw is discovered, and then given 7 days to fix it. After that, whether the fix has been made or not, the flaw should be made public.

Graham does not believe a flaw should ever be made public before the fix is ready. When I asked him, back in March this year, “What if the vendor does nothing or takes a ridiculously long time to fix it?…”

Graham sticks to his basic principle. You still don’t go public. Instead, you could, for example, go to the press “and demonstrate the flaw to them (to apply pressure to the vendor) rather than make the intimate details of how to exploit a weakness public.”

Phoenix-like, Full Disclosure returns

This is exactly what happened with the newly disclosed and fixed Dropbox vulnerability. This flaw (not in the coding, but in the way the system works) allowed third parties to view privately shared, and sometimes confidential and sensitive documents. There were two separate but related problems. The first would occur if a user put a shared URL into a search box rather than the browser URL box. In this instance, the owner of the search engine would receive the shared link as part of the referring URL.

The second problem occurred

if a document stored on Dropbox contains a clickable link to a third-party site, guess what happens if someone clicks on the link within Dropbox’s web-based preview of the document?

The Dropbox Share Link to that document will be included in the referring URL sent to the third-party site.
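The second leak can be sketched from the third-party site's point of view. This is a minimal illustration, not Dropbox's or IntraLinks' code: the share-link shape (`www.dropbox.com` with a `/s/` path prefix), the function name and the sample header are all assumptions made for the example.

```python
from urllib.parse import urlsplit

# Assumed shape of a share link; host and path prefix are illustrative only.
SHARE_HOST = "www.dropbox.com"
SHARE_PREFIX = "/s/"


def leaked_share_link(referer_header):
    """Return the share link if the Referer header exposes one, else None."""
    parts = urlsplit(referer_header)
    if parts.hostname == SHARE_HOST and parts.path.startswith(SHARE_PREFIX):
        return referer_header
    return None


# Hypothetical header received by the third-party site after a user clicks
# a link inside the web preview of a shared document.
request_headers = {"Referer": "https://www.dropbox.com/s/EXAMPLETOKEN/tax-return.pdf"}
print(leaked_share_link(request_headers["Referer"]))
```

Anyone who can read that site's request logs now holds a working link to the document, which is why the flaw lies in how the system works rather than in any one line of code.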

On 5 May 2014, Dropbox blogged:

We wanted to let you know about a web vulnerability that impacted shared links to files containing hyperlinks. We’ve taken steps to address this issue and you don’t need to take any further action.

Web vulnerability affecting shared links

On 6 May 2014 (actually on the same day if you take time differences into account), IntraLinks (who ‘found’ the flaw), the BBC and Graham Cluley all wrote about it:

- IntraLinks: Your Sensitive Information Could Be at Risk: File Sync and Share Security Issue

- BBC: Warning over unintentional file leak from storage sites

- Graham Cluley: Dropbox users leak tax returns, mortgage applications and more

But each of them talks as if they had prior knowledge of the issue and at greater depth than that revealed by Dropbox. So what exactly is the history of this disclosure?

From the IntraLinks blog we learn:

We notified Dropbox about this issue when we first uncovered files, back in November 2013, to give them time to respond and deal with the problem. They sent a short response saying, “we do not believe this is a vulnerability.”

So for almost six months Dropbox knew about this flaw but did nothing about it. Graham explained by email how it came to a head, and Dropbox was forced to respond:

Intralinks told Dropbox and Box back in November last year.

Intralinks told me a few weeks ago. My advice was to get a big media outlet interested. They went to the BBC.

The BBC spoke to me on Monday (the 5th) and contacted Dropbox. The BBC were due to publish their story that day, but Dropbox convinced them to wait until the following day (presumably they were responding).

Dropbox then published their blog in the hours before the BBC and I published our articles (Tuesday morning).

This seems to be the perfect vindication of Graham’s preferred disclosure route: use the media to force the vendor’s hand before public disclosure of a vulnerability.

But just to keep the argument going, it also vindicates my own position. Dropbox users were exposed to this vulnerability for more than four months longer than they need have been. There is simply no way of knowing whether criminals were already aware of and using the flaw, and we consequently have no way of knowing how many Dropbox users may have had sensitive information compromised during those four months. After all, the NSA knew about Heartbleed, and were most likely using it, for two years before it was disclosed and fixed.
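The "more than four months" figure can be checked with simple date arithmetic. This is a rough sketch: the exact day in November 2013 on which IntraLinks notified Dropbox is not given, so the calculation brackets the whole month, and the fix date is taken as the day of the Dropbox blog post.

```python
from datetime import date, timedelta

FIX_DATE = date(2014, 5, 5)    # date of the Dropbox blog post
DEADLINE = timedelta(days=7)   # the seven-day window argued for above


def extra_exposure_days(notified):
    """Days between the end of a seven-day deadline and the actual fix."""
    return (FIX_DATE - (notified + DEADLINE)).days


# Assumption: notification happened some time in November 2013.
earliest = extra_exposure_days(date(2013, 11, 1))
latest = extra_exposure_days(date(2013, 11, 30))
print(latest, earliest)  # 149 178
```

Even on the most generous assumption, users were exposed for roughly five months beyond a seven-day deadline.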

MS issues out-of-band patch as IE attacks increase

FireEye reported last week (26 Apr 2014) on a newly discovered Internet Explorer vulnerability that is already being exploited in the wild. The vulnerability affects all IE versions from 6 through 11, but at the time was only being exploited in versions 9-11 on Windows 7 and 8.

Two things have since happened. Firstly the attacks have widened. FireEye reported May 1 on

a newly uncovered version of the attack that specifically targets out-of-life Windows XP machines running IE 8. This means that live attacks exploiting CVE-2014-1776 are now occurring against users of IE 8 through 11 and Windows XP, 7 and 8.

“Operation Clandestine Fox” Now Attacking Windows XP Using Recently Discovered IE Vulnerability

To make this worse, FireEye also noted that multiple actors are now involved in these attacks:

…new threat actors are now using the exploit in attacks and have expanded the industries they are targeting. In addition to previously observed attacks against the Defense and Financial sectors, organization in the Government- and Energy-sector are now also facing attack.

The second new development is that Microsoft has reacted with remarkable speed, and has already released an out-of-band patch for the vulnerability. Users with automatic updates should not need to do anything – all others should make sure that they avail themselves of this update as soon as possible (details here). Interestingly, even though XP is no longer supported, an XP fix is included.

Jerome Segura, senior security researcher at Malwarebytes

(As an aside, I find this an interesting situation. Microsoft will be continuing to support XP for private customers – such as the UK government. It will therefore have the fixes. So, does Microsoft ignore the rest of the XP market even though it can keep it safe, and even though compromised unsupported XP systems could be used to attack the critical infrastructure? Jerome Segura, senior security researcher at Malwarebytes, thinks not. “Microsoft’s decision to patch XP through the automatic update channels may shoot itself in the foot by encouraging users to stick with it awhile longer,” he suggests. “Offering support for Windows XP should really be a last resort scenario because this is an aging operating system that does not meet today’s security and performance standards.”)

I have two questions on the latest developments: why do zero-day vulnerabilities spread to multiple actors so quickly; and is there an added threat from the vast numbers of unpatched, pirated and subsequently compromised XP computers? I asked FireEye’s threat intelligence manager, Darien Kindlund, for his views on these.

Darien Kindlund, threat intelligence manager at FireEye

His answer to the latter is relatively simple: we don’t know. “We know that the number of pirated copies of Windows XP is still quite large; however, we do not have updated statistics on legal vs. pirated copies,” he said.

Although pirated software can still get Microsoft’s security patches, it is quite likely that the pirates will avoid doing so for fear of being discovered. So even if Microsoft continues to release security patches for XP, good people who don’t patch and bad people who won’t patch will leave potentially millions of XP targets that could be turned to the dark side.

On the spread of 0-day attacks I wondered if the original bad actors sell on the vulnerability to other groups once the attacks have been discovered. The initial targets in this instance (defence and finance) could suggest organized crime if not state-affiliated attackers. Such targets could be expected to patch rapidly – so the value of the vulnerability would quickly lessen once its use is discovered and mitigation steps are put in place. Selling on to other actors would maximize the financial return from it when it becomes less effective.

Kindlund, however, offered a simpler explanation. “It is believed,” he said, “the original threat group using this vulnerability passed the exploit onto other groups, in order to make it harder for attribution analysis.”

But this all leaves one major problem for users. This vulnerability was in active use before it was discovered by the good guys. Then followed a period in which mitigation steps were available, but no formal patch. Now we are in the period in which sys admins will be trying to schedule in their updates, and wondering just how urgent it might be. The question is, however, how many users have already been unknowingly compromised?

Cisco has come up with some help. It has analysed an exploit and found a selection of attack indicators.

Due to active exploitation uncovered among our customer base, we are releasing the following indicators about the exploit so that anyone can investigate their own environments and protect themselves:

We’ve associated the following subjects with this campaign so far:

- Welcome to Projectmates!

- Refinance Report

- What’s ahead for Senior Care M&A

- UPDATED GALLERY for 2014 Calendar Submissions

Associated domains so far:

- profile.sweeneyphotos.com

- web.neonbilisim.com

- web.usamultimeters.com

- inform.bedircati.com

Sys admins should therefore look to their logs. If they find any of these indicators, they have been attacked and may already be compromised. Either way, the patch should be applied as early as is feasible.
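A log check along those lines might look like the following. It is only a sketch: the indicators are the ones Cisco lists above, but the function name, the log format and the sample lines are hypothetical, and a real mail or proxy log would need its own parsing.

```python
# Indicators published by Cisco (subjects and domains listed above).
INDICATORS = (
    "Welcome to Projectmates!",
    "Refinance Report",
    "What’s ahead for Senior Care M&A",
    "UPDATED GALLERY for 2014 Calendar Submissions",
    "profile.sweeneyphotos.com",
    "web.neonbilisim.com",
    "web.usamultimeters.com",
    "inform.bedircati.com",
)


def find_indicators(log_lines):
    """Return (line_number, indicator) pairs for every match, ignoring case."""
    hits = []
    for number, line in enumerate(log_lines, start=1):
        lowered = line.lower()
        for indicator in INDICATORS:
            if indicator.lower() in lowered:
                hits.append((number, indicator))
    return hits


# Hypothetical log lines, for illustration only.
sample_log = [
    "2014-04-28 09:14:02 GET http://intranet.example/index.html",
    "2014-04-28 09:15:40 GET http://web.neonbilisim.com/landing",
    "2014-04-28 09:16:11 MAIL subject=Refinance Report",
]
for line_number, indicator in find_indicators(sample_log):
    print(line_number, indicator)
```

A simple substring match will miss subjects logged with a straight apostrophe or other encoding differences, so an empty result is not proof of a clean network.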

New Android flaw could send you to a phishing site

Last week news of the Heartbleed bug broke. Initial concern concentrated on the big service providers and whether they were bleeding their users’ credentials, but attention soon turned to client devices, and in particular Android. Google said only one version of Android was vulnerable (4.1.1 Jelly Bean); but it’s the one that is used on more than one-third of all Android devices.

The problem is, Android simply won’t be patched as fast as the big providers. Google itself is good at patching; but Android is fragmented across multiple manufacturers who are themselves responsible for patching their users – and historically, they are not so good. It prompted ZDNet to write yesterday,

The Heartbleed scenario does raise the question of the speed of patching and upgrading on Android. Take for instance, the example of the Samsung Galaxy S4, released this time last year, it has taken nine months from the July 2013 release of Jelly Bean 4.3 for devices on Australia’s Vodafone network to receive the update, it took a week for Nexus devices to receive the update.

Heartboned: Why Google needs to reclaim Android updates

Today we get further evidence of the need for Google to take control of Android updating – information from FireEye on a new and very dangerous Android flaw. In a nutshell, a malicious app can manipulate other icons.

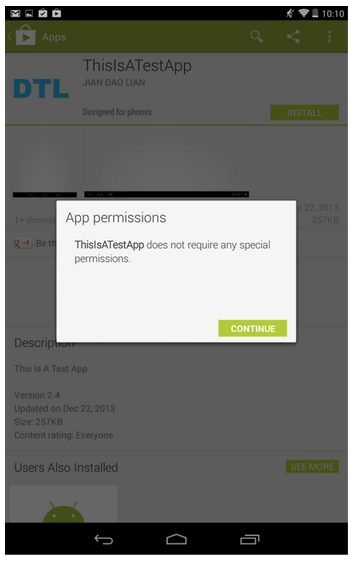

FireEye mobile security researchers have discovered a new Android security issue: a malicious app with normal protection level permissions can probe icons on Android home screen and modify them to point to phishing websites or the malicious app itself without notifying the user. Google has acknowledged this issue and released the patch to its OEM partners.

Occupy Your Icons Silently on Android

The danger, however, is this can be done without any warning. Android only notifies users when an app requires ‘dangerous’ permissions. This flaw, however, makes use of normal permissions; and Android does not warn on normal permissions. The effect is that an apparently benign app can have dangerous consequences.

As a proof of concept attack scenario, a malicious app with these two permissions can query/insert/alter the system icon settings and modify legitimate icons of some security-sensitive apps, such as banking apps, to a phishing website. We tested and confirmed this attack on a Nexus 7 device with Android 4.4.2. (Note: The testing website was brought down quickly and nobody else ever connected to it.) Google Play doesn’t prevent this app from being published and there’s no warning when a user downloads and installs it. (Note: We have removed the app from Google Play quickly and nobody else downloaded this app.)

Google has already released a patch for Android, and Nexus users will soon be safe. But others? “Many android vendors were slow to adopt security upgrades. We urge these vendors to patch vulnerabilities more quickly to protect their users,” urges FireEye.

Philips SmartTV susceptible to hack and hijack

A firmware update to the Philips SmartTV delivered last December introduced a vulnerability that leaves it open to hackers. The problem lies in a feature called Miracast. Miracast allows other devices to connect to the TV via wifi.

The problem, however, is that it uses a default hard-coded password that the user cannot change: miracast.

Maltese researchers ReVuln published a video on how to exploit the vulnerability.

In a short associated note, they added,

The impact is that anyone in the range of the TV WiFi adapter can easily connect to it and abuse of all the nice features offered by these SmartTV models like:

- accessing the system and configuration files located on the TV

- accessing the files located on the attached USB devices

- transmitting video, audio and images to the TV

- controlling the TV

- stealing the browser’s cookies for accessing the websites used by the user

In short this vulnerability could provide access to a user’s current email session for anyone within range of the wifi signal. It would also allow pranksters to hijack the TV and play inappropriate content to inappropriate viewers at inappropriate times — or perform phishing scams/adverts direct to the screen.

In reality it will not be difficult for Philips to get rid of the Miracast flaw with another firmware update doing away with the hard-coded fixed password (although a directory traversal flaw also needs to be fixed), but it should serve as a reality check for the future of the internet of things. As more and more devices in both the home and office become interconnected and interdependent, the volume of these vulnerabilities will increase. And with the flaws will come the criminals.

Manufacturers who have never had to consider infosecurity in the past, must now start considering it at the design phase. “What these vendors do not realise,” said Lancope CTO, TK Keanini in an emailed comment, “is that if they don’t build in automatic updating they are not going to succeed and worse, they will be making their consumers’ networks more insecure as updating and patching these flaws post purchase is incredibly difficult, even for the most tech savvy household. The first vendor to deliver devices that can automatically update and adapt to the changing threat environment will be the leader.”

Why do we get hacked? A combination of arrogance and denial is one reason

Have you ever wondered why we hear of a new hack every day? Well, here’s one reason – the arrogance and denial of some of our security managers.

A couple of months back I was speaking to Ilia Kolochenko, the CEO of a pentesting firm called High Tech Bridge. I asked him if pentesting was really necessary. Well, he said, just this morning I found flaws in [several high-profile media websites] that could, if cleverly exploited, lead to the complete owning of the networks concerned.

Needless to say I was interested. I asked him if he could find more, and laid down a few conditions to ensure that these weren’t old vulnerabilities that he already knew about. He delivered the goods, and the full story was published in Infosecurity Magazine: Infosecurity Exclusive: Major Media Organizations Still Vulnerable Despite High Profile Hacks.

Before publishing the story, all of the companies were notified and given a period of time to correct the flaws. Here’s a sample of the notifications:

Last week I have accidentally found an XSS vulnerability on your website that allows to steal visitors’ sensitive information (e.g. cookies or browsing history), perform phishing attacks and make many other nasty things… [details of the flaw and proof]

Please forward this information to your IT security team, so they can fix it. They may contact me in case they would need additional information and/or any assistance – I will be glad to help.

In some cases, where no vulnerability reporting address could be found, this or similar was sent to as many addresses as could be found.

Point one. Only one of the companies replied to the notification emails. This company basically said, thank you, fixed it. In reality it was only partly fixed and easily by-passed. So at the time of publishing the story, all of the websites had been contacted and given time to fix the flaw – but none of them had.

Point two. Shortly after publishing the story I received the following comments from one of the featured companies:

However try as I might I have found no-one at xyz inc who has ever heard of or from Mr Kolochenko, or yourselves, regarding any testing of our systems, vulnerabilities found, or in fact comments upon our security. Could you therefore please forward me [a copy of the several emails we had already sent].

Needless to say we did this, including an automated receipt email that proved that xyz inc had been sent and had received the email.

This head of xyz’s security then went on to accuse me of writing an advertorial for Kolochenko. He added,

…the vast majority of reported attacks on media broadcasters and press organisations so far in 2013 have had nothing to do with external attacks on websites or online presence, and the Syrian Electronic Army in particular have never used this attack vector – every one of their successful breaches has been the result of a phishing attack, which Mr Kolochenko’s tools will do nothing whatsoever to obviate.

This, of course, is both wrong and irrelevant – how the SEA’s preference for phishing (which could have been made easier by exploiting this vulnerability anyway) somehow protects xyz inc is beyond me.

The simple fact is this head of security was more concerned with deflecting any blame from himself, denying any vulnerability in his system and accusing me of lacking professional standards than in actually finding and fixing said vulnerability. A little humility and acceptance of help from security researchers might go a long way to making the internet a safer place.

Postscript. Following publication of the article, the websites in question fixed the flaws. As far as xyz inc is concerned, Ilia subsequently received a further email:

We have now pushed out a fix for this vulnerability. Thanks very much for bring this to our attention.

Regards

xyz inc

Is it safe to carry on using Dropbox (client vulnerability)? Yes and No: Part IV

Two researchers have found they can exploit the Dropbox client in order to access the user’s cloud storage; and the resulting headlines can seem a bit worrying:

Reverse-Engineering Renders Dropbox Vulnerable

This can’t be good for Dropbox for Business

Researchers Reverse Engineer Dropbox Client

Security Vulnerability Allegedly Discovered in Dropbox Client

The effect of this vulnerability, if exploited, can bypass the Dropbox two-factor authentication and give the attacker full access to the user’s stored files. We must therefore once again ask if it is safe to carry on using Dropbox.

The researchers have developed a fairly generic method for reverse engineering the Python code used for the Dropbox client. This shouldn’t be possible, and is consequently a real achievement. Having gained access to the source code they were able to see how the Dropbox client works.

One of the reasons Dropbox is so popular – it has more than 100 million users – is that it is easy to use. Turn on your computer and, voila, it’s there ready and waiting. By reversing the code and finding a way to decrypt it, our researchers also discovered how this ‘ease of use’ actually works.

Following registration with Dropbox, each client is given a unique host_id value that is used for all future log-ons. This is stored, encrypted, in the client – but can be retrieved and decrypted. A second value, host_int, is received from the server at log-on.

In fact, knowing host_id and host_int values that are being used by a Dropbox client is enough to access all data from that particular Dropbox account. host_id can be extracted from the encrypted SQLite database or from the target’s memory using various code injection techniques. host_int can be sniffed from Dropbox LAN sync protocol traffic.

Looking inside the (Drop) box

Thus the client is vulnerable; thus the user’s account is vulnerable.

But is it? Technically, yes. But consider… in order to effect this vulnerability, the attacker must have full access to the user’s Dropbox client. And for that to happen, the attacker must have full access to the user’s computer. In other words, the attacker must have already owned the user’s PC – and once that has happened, nothing is safe.

It’s a technical rather than practical vulnerability – and on its own, it shouldn’t deflect users from using Dropbox (for other reasons not to use Dropbox, see Is it safe to carry on using Dropbox (post Prism)? Yes and No: Part III).

In fairness to the researchers, they did not present their findings as a Dropbox vulnerability. Their paper is called Looking inside the (Drop) box, and it says,

We believe that our biggest contribution is to open up the Dropbox platform to further security analysis and research. Dropbox will / should no longer be a black box.

The authors would like to see an open source Dropbox client that can be continuously peer-reviewed by the world’s security researchers. This is really a paper about reverse engineering Python – that’s the big deal.

Are you vulnerable to the Android master key flaw?

Last week Bluebox Security published details of an Android vulnerability that affects up to 99% of all Android devices. I wrote about it on Infosecurity Magazine here. It’s a code signing flaw that allows attackers to trick the device into accepting an update as an official update even when it isn’t. The fractured nature of the Android market makes it difficult to fix – different manufacturers use different versions of the operating system, and it is likely that some manufacturers won’t bother fixing it at all.

The immediate workaround is to avoid side loading. It will be difficult for attackers to use the flaw for a mal-modified app via the Play store. But not – nothing ever is – impossible.

Now Bluebox has come to the rescue with a new free app. It doesn’t negate the flaw, but will help you know if you’ve been done. Firstly, it allows you to check to see if your device has been patched. But, “It will also scan devices to see if there are any malicious apps installed that take advantage of this vulnerability,” writes Jeff Forristal, Bluebox CTO, in a blog posting today.

The app is available from both Google Play and the Amazon AppStore for Android.

Disclosure timeline for vulnerabilities under active attack

This is the headline of a new Google blog: Disclosure timeline for vulnerabilities under active attack. It’s beautiful, and I like to think intentional. On the surface, it simply says that we, Google, are explaining our new timeline for the disclosure of vulnerabilities discovered by our engineers, if they are being actively exploited.

But underneath there is a subtle dig at Microsoft. Microsoft has always demanded a lengthy timeline; and would probably prefer indefinite non-disclosure. Google, however, has always championed a short timeline. It is oh so easy to read this headline as: Microsoft’s disclosure timeline for vulnerabilities is now under active attack by Google.

This new disclosure timeline for actively exploited vulnerabilities is seven days. You cannot fault the logic – with dissidents increasingly targeted by spyware, failure to disclose could potentially be life-threatening. Hell, I would say that it should be a 24 hour timeline. Be that as it may, Google has for now settled on seven days.

And it’s going to be contentious. But here’s the genius. If you’re gonna cause a ruckus, why not get in a sly dig, cloaked in the genius of ambiguous deniability, at the same time?

Is Vupen black hat or white hat?

I was talking to GFI Software about the new patch management module added to their VIPRE Business product – but as so often happens in interesting conversations we got side-tracked. Since patches are often forced by researchers’ vulnerability disclosures, I asked GFI for its position on full vs responsible disclosure. This led to the difference between black hat and white hat researchers: basically, Jong (Jong Purisima, antivirus lab manager) told me, “black hat researchers sell their vulnerabilities for money, while white hat researchers report the vulnerability to help the user be more secure and gain the kudos for the discovery.”

Incidentally, as a vendor, GFI would like a couple of days prior warning before a white hat researcher goes public, but believes that a fortnight is more than reasonable – a refreshing attitude compared to the ‘don’t ever disclose’ hysteria promoted by some vendors.

Anyway, a black hat researcher sells his discoveries to make money. So where does that put Vupen? Vupen is a sort of zero-day broker. It buys or develops zero-day exploits and sells them to governments. We are told it doesn’t sell them to anyone else; but that is pretty difficult to prove or disprove. (Even there, given the US Olympic Games project, and the Stuxnet and Flame episodes, there seems little difference between governments and criminal gangs anyway.)

So that’s the question. Is Vupen black hat or white hat? Jong said, “technically, they’re black hat.” Mark (Mark Patton, general manager of the Security Business Unit) suggested, “Grey hat? Perhaps dark grey hat?” To me, Vupen is simply a black-as-night hat. Any takers?

News stories on Infosecurity Magazine: 17, 18, 21 and 22 May, 2012

My recent news stories…

You don’t need to be hacked if you give away your credentials

GFI Software highlights the problems of users’ carelessness with their credentials: who needs hacking skills when log-on details are just handed over?

22 May 2012

A new solution for authenticating BYOD

New start-up SaaSID today launches a product at CloudForce London that seeks to solve a pressing and growing problem: the authentication of personal devices to the cloud.

22 May 2012

New HMRC refund phishing scam detected

Every year our tax details are evaluated by HMRC. Every year, a lucky few get tax refunds; and every year, at that time, the scammers come out to take advantage.

22 May 2012

UK government is likely to miss its own cloud targets

G-Cloud is the government strategy to reduce IT expenditure by increasing use of the cloud. It calls for 50% of new spending to be used on cloud services by 2015 – but a new report from VMware suggests such targets will likely be missed by the public sector.

21 May 2012

New Absinthe 2.0 Apple jailbreak expected this week

The tethered jailbreak for iOS 5.1, Redsn0w, still works on iOS 5.1.1. This week, probably on 25 May, a new untethered jailbreak is likely to be announced at the Hack-in-the-Box conference.

21 May 2012

TeliaSonera sells black boxes to dictators

While the UK awaits details on how the proposed Communications Bill will force service providers to monitor internet and phone metadata, Sweden’s TeliaSonera shows how it could be done by selling black boxes to authoritarian states.

21 May 2012

Understanding the legal problems with DPA

We have known for many years that the EU is not happy with the UK’s implementation of the Data Protection Directive – what we haven’t known is why. This may now change thanks to the persistence of Amberhawk Training Ltd.

18 May 2012

Who attacked WikiLeaks and The Pirate Bay?

This week both The Pirate Bay and WikiLeaks have been ‘taken down’ by sustained DDoS attacks: TPB for over 24 hours, and WikiLeaks for 72. What isn’t known is who is behind the attacks.

18 May 2012

BYOD threatens job security at HP

BYOD isn’t simply a security issue – it’s a job issue. Sales of multi-function smartphones and tablets are reducing demand for traditional PCs; and this is hitting Hewlett Packard.

18 May 2012

25 civil servants reprimanded weekly for data breach

Government databases are full of highly prized and highly sensitive personal information. The upcoming Communications Bill will generate one of the very largest databases. The government says it will not include personal information.

17 May 2012

Vulnerability found in Mobile Spy spyware app

Mobile Spy is covert spyware designed to allow parents to monitor their children’s smartphones, employers to catch time-wasters, and partners to detect cheating spouses. But vulnerabilities mean the covertly spied-upon can become the covert spy.

17 May 2012

Governments make a grab for the internet

Although the internet is officially governed by a bottom-up multi-stakeholder non-governmental model, many governments around the world believe it leaves the US with too much control; and they want things to change.

17 May 2012