Archive

The hypocrisy of the European Union’s Freedom of Expression guidelines

Last week the Council of the EU published the EU Human Rights Guidelines on Freedom of Expression Online and Offline. They are really aimed at non-EU states that show little regard for human rights, but the reality is that the EU should look closely at its own behaviour.

Consider just three extracts:

1. Free, diverse and independent media are essential in any society to promote and protect freedom of opinion and expression and other human rights. By facilitating the free flow of information and ideas on matters of general interest, and by ensuring transparency and accountability, independent media constitute one of the cornerstones of a democratic society. Without freedom of expression and freedom of the media, an informed, active and engaged citizenry is impossible… Efforts to protect journalists should not be limited to those formally recognised as such, but should also cover support staff and others, such as “citizen journalists”, bloggers, social media activists and human rights defenders, who use new media to reach a mass audience…

2. Support the adoption of legislation that provides adequate protection for whistleblowers and support reforms to give legal protection to journalists’ right of non-disclosure of sources…

3. The right to seek and receive information

The right to freedom of expression includes freedom to seek and receive information. It is a key component of democratic governance as the promotion of participatory decision-making processes is unattainable without adequate access to information. For example the exposure of human rights violations may, in some circumstances, be assisted by the disclosure of information held by State entities. Ensuring access to information can serve to promote justice and reparation, in particular after periods of grave violations of human rights. The UN Human Rights Council has emphasized that the public and individuals are entitled to have access, to the fullest extent practicable, to information regarding the actions and decision-making processes of their Government…

These are, put simply, ‘a free and independent press, including bloggers’; ‘protection for whistleblowers’; and ‘freedom of information’, all of which are necessary to a democratic society.

Independent press

The UK seeks to curtail an independent press. It does this through threats (such as using the Leveson proposals against journalists and editors), abuse of the Terrorism Act (just as Obama abuses the Espionage Act), and pure and simple bullying.

Leveson

Example: When Guido Fawkes’ political blog scooped the mainstream press on the arrests of Max Clifford, Jim Davidson and Rolf Harris, Fawkes wrote,

No judge has ordered reporting restrictions in relation to Rolf Harris, no super-injunctions prevent the reporting of news concerning him, instead his lawyers Harbottle and Lewis are citing the Leveson Inquiry’s report in letters to editors of newspapers – cowing them into silence. The Leveson effect is real and curtailing the freedom of the press through fear.

Leveson Effect: Can You See What It Is Yet?

Terrorism Act

Example: David Miranda was detained at Heathrow under the Terrorism Act, and had his computer equipment confiscated, when he was merely passing through on the way from Berlin to Brazil. To achieve this, the UK government had to treat him as a terrorist for possibly carrying Snowden files.

Bullying

Example: Government officials insisted on and oversaw the physical destruction of The Guardian’s hard disks that contained Snowden files.

Manning, Assange & Snowden – the 3 great whistleblowers of the modern age

Protection for whistleblowers

The three great whistleblowers of the modern age are Chelsea (Bradley) Manning, Julian Assange, and Edward Snowden. Manning is in prison and likely to stay there for many years to come; Assange has a European Arrest Warrant against him and is effectively imprisoned for life in the Ecuadorean Embassy in London; and the whole of Europe has refused to provide asylum to Snowden.

At the Stockholm Internet Forum set for the end of May, and hosted by the Swedish government,

.SE – the only non-governmental organization among the hosts – made a list of possible candidates. The most important name on it: Edward Snowden. Further names included journalists Glenn Greenwald and Laura Poitras, the two journalists that informed the world about the NSA’s activities, Guardian Editor in Chief Alan Rusbridger as well as hacker Jacob Appelbaum, who found the mobile phone number of German Chancellor Angela Merkel in Snowden’s database. The list of candidates was sent to the Swedish Foreign Ministry for approval.

Swedish Foreign Ministry prevents Snowden’s invitation

In the event, Carl Bildt’s foreign ministry vetoed all except Laura Poitras, who declined the invite because of the blacklist.

If the European Union were serious about protection for whistleblowers, it would provide protection for Assange and Snowden. For the former it is assisting the US attempts at getting him into the USA; and for the latter it is doing nothing to prevent it.

Freedom of information

This, says the EU, is a necessary ingredient for democracy; but it denies it to its own people. In April, Dr Helen Wallace of GeneWatch announced:

GeneWatch has spent 12 months battling to reveal documents showing extensive government contacts between the Department of Food, Environment and Rural Affairs (Defra) and the GM crop lobby group the Agricultural Biotechnology Council (ABC).

“These partial documents strongly suggest the Government is colluding with the GM industry to manipulate the media, undermine access to GM-free-fed meat and dairy products and plot the return of GM crops to Britain”, said Dr Helen Wallace, Director of GeneWatch UK, “The public have a right to know what is going on behind closed doors”.

She was complaining about missing and redacted documents from the Department for Environment Food & Rural Affairs (DEFRA). Early in May she commented,

These documents expose Government collusion with the GM industry to agree PR messages and blacklist critical journalists. Scientists have been cherry-picked to push GM industry PR, as it seems the Government has made promises of research funds tied to public-private partnerships with Monsanto or Syngenta dependent on supporting commercial cultivation of RoundUp Ready GM crops in Britain. Disturbingly, the Government has also been kept in the loop over lobbying by GM feed importers behind closed doors to stop supermarkets offering their customers the choice of GM-free-fed meat and dairy products. British consumers have lost out to boost Monsanto’s profits, as more GM RoundUp Ready soya is shipped in for use in feed, harming the environment abroad.

In short, the UK government systematically denies information to the UK people where the democratic process might disturb its autocratic purposes. This is contrary to both the spirit and word of the EU’s freedom of expression guidelines.

The only realistic conclusion that can be drawn from the EU guidelines is that they are nothing other than propaganda designed to make European citizens believe that they live in a democracy. It wants the world to believe that it has high ideals over freedom of expression and access to information, but does little to ensure it within its own borders.

Andrew Weev Auernheimer freed on an important technicality

Just over one year ago, Andrew (Weev) Auernheimer was sentenced to 41 months in prison for downloading data that AT&T had left exposed on the internet. That data was the email addresses of more than 100,000 early iPad adopters; and was a major embarrassment for AT&T.

Perhaps because of the importance of AT&T to law enforcement; perhaps because of the celebrities and government officials included in the early adopters; the government prosecuted Weev under the Computer Fraud and Abuse Act.

The important point to remember is that Weev performed no hack, subverted no security defences — he merely downloaded (effectively by asking the site to give him…) the email addresses of AT&T customers. The implication of the government action against him is that any site could declare any data ‘prohibited’ after its download, and allow the government to prosecute anyone who had downloaded it.

It would also mean that much genuine and valuable security research — such as testing a website to see if it is vulnerable to the Heartbleed bug — and even the compilation of web search databases such as Google and Bing would be illegal.

Weev appealed, and one year and a bit later, on 11 April 2014, Third Circuit judges vacated the conviction.

The satisfactory outcome is that Weev has been freed from another government CFAA overreach. The unsatisfactory outcome is the cop-out manner in which it was done by the court.

The appeal was effectively over the misuse of the CFAA, and the location of the trial in New Jersey. Location is an important concept in US computer law. If the conviction had been allowed to stand, prosecutors would be able to cherry-pick from different state laws (as indeed they seem to have done with Weev) in order to maximise the penalty. But the law says that there must be a geographical connection between the crime and the prosecution.

In this instance Auernheimer was in Arkansas, his accomplice was in California, AT&T was in Texas with the server in Georgia — and Gawker (which published some of the email addresses downloaded by Weev) was in New York. But the government prosecuted him in New Jersey where state laws allowed a longer sentence.

Few people believe that Weev’s conviction and sentence were anything other than a miscarriage of justice. This view could have been upheld by the appeal court either on the misuse of the CFAA or the venue of the trial. It chose the latter because this meant it did not need to consider the former. The great news is that the conviction has been vacated; the disappointing news is that the CFAA itself has not been challenged and future overreach remains a distinct possibility.

BBC not available to Brits

Umm… We Brits fund the BBC through our licence fees. But we cannot view this BBC page because we do not fund it. Rather it is funded by the BBC, not by us; and therefore we cannot view it.

I think that’s what it’s saying…

(Incidentally, the page in question is http://www.bbc.com/future/story/20130820-cyber-pearl-harbor-a-real-fear)

Demonstrating that anti-virus doesn’t just depend on up-to-date signatures

The granddaddy of security software is the venerable anti-virus. But the mother of all attacks is the zero-day targeted exploit. Vendors of new products specifically designed to protect against the latter continuously insinuate that anti-virus no longer works — ergo you need to buy their shiny new product to stay safe.

These vendors point out that the attacker merely needs to modify the malware to change its signature to instantly create a pseudo-0-day that defeats AV signature engines. And to prove their point, they will submit the pseudo or actual 0-day to VirusTotal to demonstrate that few if any AV products actually detect it.
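The vendors' point about signatures can be shown with a deliberately simplified sketch. It assumes a "signature" is nothing more than a SHA-256 hash of the whole file, which is far cruder than what real AV engines do; the sample bytes and function names are hypothetical stand-ins, not real malware or any product's API.

```python
import hashlib


def signature(sample: bytes) -> str:
    """Toy exact-match signature: the SHA-256 of the whole file (an assumption)."""
    return hashlib.sha256(sample).hexdigest()


# Hypothetical stand-in for a file the vendor has already seen; not real malware.
known_sample = b"MZ\x90\x00 hypothetical payload bytes"

# The toy signature database holds hashes of everything seen so far.
signature_db = {signature(known_sample)}


def detected_by_signature(sample: bytes) -> bool:
    """Return True only if the sample's hash is already in the database."""
    return signature(sample) in signature_db


# The attacker appends one padding byte; what the file does is unchanged,
# but its hash is now completely different.
modified_sample = known_sample + b"\x00"

print(detected_by_signature(known_sample))     # True
print(detected_by_signature(modified_sample))  # False
```

The sketch models only the exact-match lookup. It says nothing about behavioural detection, which watches what a file does rather than what it looks like.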

This gives a false impression. VT basically just submits the sample to the signature engine — which won’t detect 0-days. But the AV industry long ago accepted that signatures alone are not enough, and built additional behavioural defences into their products. These are not generally tested by VT.

So when a VirusTotal report says a particular sample was not detected by your own AV software, that doesn’t necessarily mean that it would not be detected by the AV product’s behavioural methods in situ on your PC. It’s a difficult thing to prove, and it has left the anti-virus industry disadvantaged against the arguments of the newer products.

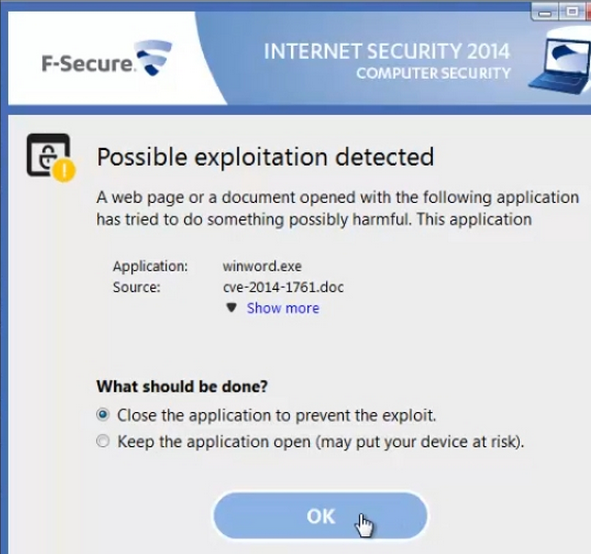

Now F-Secure has tested it. There is a new 0-day MS Word/RTF vulnerability that is expected to be fixed by Microsoft in this week’s Patch Tuesday patches. For the moment, it remains a 0-day.

“Now that we got our hands on a sample of the latest Word zero-day exploit (CVE-2014-1761),” reported Timo Hirvonen, senior researcher at F-Secure, yesterday, “we can finally address a frequently asked question: does F-Secure protect against this threat? To find out the answer, I opened the exploit on a system protected with F-Secure Internet Security 2014, and here is the result:”

I would suggest that F-Secure is not the only AV software able to detect the worrying behaviour, if not the signature, of the 0-day without ever seeing the malware.

The reality is that no software can guarantee to stop all malware; but anti-virus software remains the bedrock of good security. Adding to it is prudent; replacing it is foolhardy.

That Mandiant sale thing

Working in security is guaranteed to do one thing – it makes you cynical and distrustful. Snowden has quantified and multiplied that distrust – so it is perfectly possible that my cynicism has more to do with personal paranoia. But…

Is Mandiant really worth $1 billion? That’s what FireEye paid for it. Not in cash, but in shares – and it would have been more had FireEye’s shares not fallen just prior to the announcement. Shares, incidentally, that FireEye only has because it went public less than four months ago. It couldn’t buy Mandiant with cash because FireEye has never yet turned a profit.

Mandiant is privately held, and the big winners in the acquisition will be Mr. Mandia, the company’s founder, Mr. DeWalt, who joined Mandiant’s board as chairman in 2012, and the company’s venture backers. Mandiant has raised $70 million from Kleiner Perkins Caufield & Byers, the venture capital firm, and One Equity Partners, an investment arm of JPMorgan Chase.

FireEye Computer Security Firm Acquires Mandiant: New York Times

But hang on a bit. Isn’t Mr DeWalt chairman of FireEye? Yes he is. On 23 June 2012 he said, “Greetings everyone – Dave DeWalt here, the new chairman of FireEye. I have to tell you I’m very excited to join this hot security company…”

But just over a month earlier he blogged about his happiness in becoming Mandiant’s chairman:

Now, for Dave DeWalt to be a ‘big winner’ in the sale of Mandiant, he has to have a percentage of that company – which would not be surprising for the chairman of that company. So what we have is a timeline of Mr DeWalt becoming chairman of Mandiant (and some time thereabouts receiving a percentage of or investing some money in ownership of Mandiant); that same Mr DeWalt becoming chairman of FireEye approximately six weeks later; and then the second Mr DeWalt buying the first Mr DeWalt’s part of Mandiant for a very large sum of money just 18 months later.

I have, of course, asked FireEye if Mr DeWalt was still a member of the board of Mandiant at the time of the purchase, and how much he made from the sale – but have not at the time of writing this received a reply.

Cryptocat — encryption can make you safe, or very very unsafe

One of the first rules of security is that you never use a product that employs any form of proprietary cryptography. And if a security guy then says ‘be careful’, you’d best be very very careful — no matter how many magazines or newspapers say the product is the real deal.

That’s what happened with Cryptocat which is a secure chat product that “could save your life and help overthrow your government,” according to Wired — it could “save lives, subvert governments and frustrate marketers.” Forbes said that it “establishes a secure, encrypted chat session that is not subject to commercial or government surveillance.” Sounds good.

But security folk weren’t so sure. “Since Cryptocat was first released,” warned Christopher Soghoian in July 2012, “security experts have criticized the web-based app, which is vulnerable to several attacks, some possible using automated tools.”

Patrick Ball expanded in August 2012:

CryptoCat is one of a whole class of applications that rely on what’s called “host-based security”… Unfortunately, these tools are subject to a well-known attack… but the short version is if you use one of these applications, your security depends entirely on the security of the host. This means that in practice, CryptoCat is no more secure than Yahoo chat, and Hushmail is no more secure than Gmail. More generally, your security in a host-based encryption system is no better than having no crypto at all.

When It Comes to Human Rights, There Are No Online Security Shortcuts

Security professionals, then, were not surprised when last week Steve Thomas wrote about his DecryptoCat — which does what it says on the can: it cracks the keys that let you read the messages.

If you used group chat in Cryptocat from October 17th, 2011 to June 15th, 2013 assume your messages were compromised. Also if you or the person you are talking to has a version from that time span, then assume your messages are being compromised. Lastly I think everyone involved with Cryptocat are incompetent.

DecryptoCat

This is a big deal, because Cryptocat has been marketed towards dissidents operating in repressive regimes. As Soghoian wrote:

We also engage in risk compensation with security software. When we think our communications are secure, we are probably more likely to say things that we wouldn’t if our calls were going over a telephone line or via Facebook. However, if the security software people are using is in fact insecure, then the users of the software are put in danger.

Tech journalists: Stop hyping unproven security tools

Add to that the current revelations on the NSA/GCHQ mass surveillance, and our understanding from last week’s Snowden revelations that the NSA automatically and indefinitely retains encrypted messages, and we can say with pretty near certainty that if you have been using Cryptocat, at least the US and UK governments are aware of everything you said.

A collection of news items from the end of 2012

Briefly, towards the end of last year, I contributed a newsy column to the print version of Infosecurity Magazine. The magazine has now kindly allowed me to post the items here. There are eight items in total; viz,

False positives and the disposition matrix

Megaupload takedown – an unmitigated disaster?

Brace yourselves, Europe – the lawyers are coming

A strange spam variant with an exokernelized solution

What’s the main cause of movie piracy?

In deep space, no-one can see you surf

After Samsung, Apple turns its big patent guns on… a Polish grocer

Is your computer photochromatic?

Just in case you missed any of them…

Containment – a kettle by any other name…?

Kettling is an emotive issue. It is a police tactic used around the world to contain and limit protests. The theory is pretty good. If any area of the protest is over-heating, isolate it and separate it and allow it to fizzle out. But the practice is not so simple. Innocent bystanders can be caught. Human rights can be violated. And in the UK, it is illegal unless the police have genuine reason to believe it is necessary to prevent violence.

The Corporate Greed demonstration in London on Saturday 15 October could hardly be called a violent protest.

Earlier today protestors were peacefully prevented from gaining access to Paternoster Square, and there has been no major disorder.

Met Police statement: Update on protests in City of London

That suggests that kettling would be illegal. And indeed, according to the BBC, there was no kettling.

But police at the scene said a “kettling” technique had not been used and that protesters were free to leave the square.

Occupy London protests in financial district

But, admitted the Met:

There is currently a containment at St Paul’s Churchyard to prevent breach of the peace. We will look to disperse anyone being held as soon as we can.

A containment officer is on the scene to make sure this process works effectively.

We will attempt to communicate with people within the containment area and will provide water and toilets for those being contained.

Those who are suspected of being involved in disorder may be questioned or arrested as they leave the containment.

That’s a kettle described by a PR man. But isn’t this part of the cause of the protests? The way we are fed half-truths and misleading information to keep us quiet?

Should we blame ACTA on Norton-style statistical manipulation?

Patrick Gray (Risky Business) has gone to town on statistics released by Norton. The claim is that cybercrime now costs us almost as much as the illicit drugs trade.

If the numbers are to be believed, these reports say, that means cybercrime costs us nearly as much as the global trade in illicit drugs. It’s a sensational claim and makes an awesome headline, but any way you slice or dice the numbers they just simply don’t stack up.

Norton’s cybercrime numbers don’t add up

Patrick points out that Norton’s figures include ‘indirect’ costs while the drug figures do not.

…But if you add the USD$114bn figure for direct cybercrime losses to the USD$274bn “time lost” figure, you wind up with a total just under the figure for drug sales (USD$402bn).

…Just think of the harm being inflicted on Mexico right now by the drug cartels, not to mention narco-related drama in countries like Afghanistan. Then there’s the money spent on the “War on Drugs,” keeping drug dealers in prison and the productive capacity society loses to all those dope-smoking young males glued to their PlayStation 3s.
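To make the arithmetic in that extract concrete, here is a quick sketch using only the three figures quoted (in billions of US dollars). The variable names are mine, and the "direct only" comparison is simply one way of putting the two sides on a like-for-like basis.

```python
# Figures as quoted above, in billions of US dollars.
direct_losses_bn = 114   # direct cybercrime losses
time_lost_bn = 274       # indirect "time lost" figure
drug_trade_bn = 402      # figure given for illicit drug sales

# Direct plus indirect costs, the combination the extract describes.
cybercrime_total_bn = direct_losses_bn + time_lost_bn

# Share of the drug-trade figure, with and without the indirect costs.
total_share = cybercrime_total_bn / drug_trade_bn
direct_share = direct_losses_bn / drug_trade_bn

print(cybercrime_total_bn)       # 388
print(f"{total_share:.1%}")      # 96.5%
print(f"{direct_share:.1%}")     # 28.4%
```

With the indirect figure included, cybercrime comes to nearly the full drug-sales number; on direct losses alone it is a little over a quarter of it.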

What comes out of this post is the extent to which vested interests manipulate figures to suit themselves. It happens all the time and everywhere. It happens in politics and throughout industry. One of the most ridiculous, overbloated and absurd figures comes from the rightsholders to justify ACTA. They will take an area that has a high use of pirated goods, extrapolate that across the world, multiply that figure by the retail value of the goods concerned, and claim that they are losing the full amount to piracy. Firstly it uses an extrapolation based on ridiculously inflated assumptions, and then assumes that everybody using pirated goods would have paid the full amount if the ‘free’ version was unavailable.
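The extrapolation trick described above can be sketched in a few lines. Every number here is invented purely for illustration (population, piracy rates, price, and the share of pirates who would otherwise have bought); none comes from any rightsholder report, and the function names are hypothetical.

```python
def estimated_loss(population: int, piracy_rate: float,
                   retail_price: float, would_have_bought: float) -> float:
    """Loss = people * share pirating * full price * share who would have paid."""
    return population * piracy_rate * retail_price * would_have_bought


# All values below are made-up assumptions for the sake of the example.
WORLD_POPULATION = 7_000_000_000
HOTSPOT_PIRACY_RATE = 0.5   # rate measured in one high-piracy area
GLOBAL_PIRACY_RATE = 0.1    # a lower rate assumed for the world as a whole
RETAIL_PRICE = 10.0         # full retail price of the product
SUBSTITUTION_RATE = 0.2     # assumed share of pirates who would have paid

# The inflated method: apply the hotspot rate to everyone and assume
# every pirated copy is a lost full-price sale.
inflated = estimated_loss(WORLD_POPULATION, HOTSPOT_PIRACY_RATE,
                          RETAIL_PRICE, would_have_bought=1.0)

# A more cautious method: a lower global rate, and only some lost sales.
cautious = estimated_loss(WORLD_POPULATION, GLOBAL_PIRACY_RATE,
                          RETAIL_PRICE, would_have_bought=SUBSTITUTION_RATE)

print(f"inflated claim: {inflated:,.0f}")
print(f"cautious claim: {cautious:,.0f}")
print(f"inflation factor: {inflated / cautious:.0f}x")
```

The absolute numbers mean nothing. What matters is that the two assumptions multiply, so modest changes to each one move the headline figure by an order of magnitude or more.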

The tragedy is that it has worked. ACTA is on the point of being signed. As far as Europe is concerned, this is undemocratic, secretive and illegal. As far as America is concerned,

The United States finds itself in a particularly bizarre situation – on the one hand, it claims that the Agreement is fully in line with domestic law while, on the other, it is reportedly not prepared to be bound by the Agreement and is treating the text as a non-binding “Executive Agreement.” The USA does, however, expect the other signatories of the Agreement to consider themselves legally bound.

Countries start signing ACTA, preparatory docs still secret

ENISA responds to my ‘rant’ on the Appstore Security report

Marnix Dekker, one of the authors of the ENISA report on Appstore Security, has responded to my post in its comments (Appstore security: a new report from ENISA) and I would like to thank him for doing so. It’s worth reading, and I reproduce it here in full:

As one of the authors, allow me to briefly reply to your comments.

First of all thank you for reviewing the paper, I appreciate the feedback. Rants can be very refreshing.

It is true that the lines of defense are not in any way controversial and may seem obvious. We felt that there was the need to outline the different defenses that can be used, as most of the app stores and platforms are not very explicit about these defenses. This is confusing for consumers.

Allow me to comment on your criticism of the killswitch. I would like to note that we do not exclude that there are other (than military) settings where a killswitch is unwanted. Bear in mind that most of the users do not want to keep malware on their device. We even mention that an opt-out where appropriate should be offered.

About jails: We are not saying that jailbreaking should be illegal, or that consumers should have no means of using alternative appstores… only that this should not be made so easy as to allow drive-by download attacks (email+link, genuine looking appstore, install approval, click, infected).

Your alternative proposal, to hold appstores liable for software vulnerabilities, is really a legal solution. I think it is a very interesting subject, but (big disclaimer) I am not a legal expert:

Some issues with this:

- It would be easy to set up a rogue appstore, run it from some obscure country, fill it with some infected apps. It would also be relatively easy to trick users into installing from there. Your solution, to simply find a suspect, and a court to fine, sounds to me a bit complicated. Just think of all the extradition procedures, harmonisation of laws, etc. that would be needed. Let’s ignore rogue appstores in the sequel.

- If I look at other platforms/software I do not see many consumers being granted compensation by courts, nor do I see many software vendors being fined for selling/distributing flawed software. Now this could change in the future, but I think we should address security in the meantime as well.

- Secondly, judges usually start fining people when it is clear they have been negligent or had malicious intent. That requires some kind of definition/agreement of what are best practices and sufficient measures/defences.

- Another issue with liability is – I think – the following: Imagine the opensourcing of software to continue. Android, Linux, Openoffice, etc, are examples of this trend: A couple of volunteers decide to solve a problem (text editing say) by writing some software routines (say openmoko)… they publish them free of charge and they disclaim that you should only use this software at your own risk. Would you think it is fair to still fine them for flaws? What I am trying to say is that there are numerous examples of free opensource software/apps/platforms, and that we still need to address security there as well. Do you agree that the liability solution would only work for commercial software/platforms? In that case, what do we do about the rest?

Looking forward to discussing with you – software liability is a fascinating topic 🙂

Best, Marnix